Examples Across Industries

Competency-Based Assessment is versatile. Because it measures real performance in context, it can be applied in regulated sectors, corporate learning, and education alike. Across industries, the same principle holds true: competence is proven not by theory alone, but by what people can reliably do.

- Healthcare: Nurses assessed on infection-control practices during patient care. Competence is judged by consistent adherence to protocols, not just memorising them.

- Aviation: Pilots tested through flight simulators that recreate crisis scenarios. What matters is decision-making under pressure, not theoretical recall.

- Corporate Training: Managers evaluated on leading conflict-resolution sessions, where both process and outcome provide evidence of capability.

- Education: Students assessed through project-based tasks, demonstrating how they apply theory to practical, real-world challenges.

These examples show that CBA can be applied in high-stakes professions, leadership development, and even classroom settings.

These approaches also connect with how individuals prefer to learn. For example, understanding Honey and Mumford’s Learning Styles can help educators design competency assessments that suit diverse learners.

Benefits of Competency-Based Assessment

- Relevance: Aligns learning with tasks employees or students will face in practice.

- Compliance: Provides auditable records that satisfy regulators and accreditation bodies.

- Development: Encourages learners to see assessment as part of growth, not just a hurdle.

- Return on investment: Organisations can track workforce readiness and identify training gaps, so training spend goes where it is actually needed.

- Confidence: Learners gain assurance that they are meeting professional standards.

Each of these benefits depends on well-designed systems, which is why CBA is often most successful when supported by digital tools that streamline evidence collection and reporting.

Challenges and How to Address Them

- Time and resources. Designing frameworks and training assessors takes investment. Starting with small pilots helps manage this.

- Assessor bias. Even with rubrics, judgments can be inconsistent. Regular calibration sessions, in which assessors score the same evidence and compare their judgments, improve reliability.

- Scalability. As programmes grow, maintaining consistency becomes harder. Digital competency tracking systems can help standardise assessments across teams.

- Superficial design. Tasks that appear authentic may still fail to capture real complexity. Embedding multiple evidence types and scenario-based tasks reduces this risk.

Best Practices for 2025

- Blend quantitative rubrics with qualitative narratives for richer evidence.

- Encourage self- and peer assessment alongside assessor judgment.

- Use technology such as digital training matrices to centralise data and reporting.

- Refresh competency frameworks every 2–3 years to reflect evolving skill demands.

- Always integrate feedback loops so assessment drives improvement, not just certification.

A free Self-Assessment Checklist (DOCX) is available to support self-assessment. This interactive tick-box tool lets individuals review their competencies in areas such as communication, teamwork, problem-solving, and leadership.


Embedding structured reflection is equally valuable. Schön’s Reflective Practice shows how self-assessment and feedback can transform assessment into continuous professional growth.

FAQs

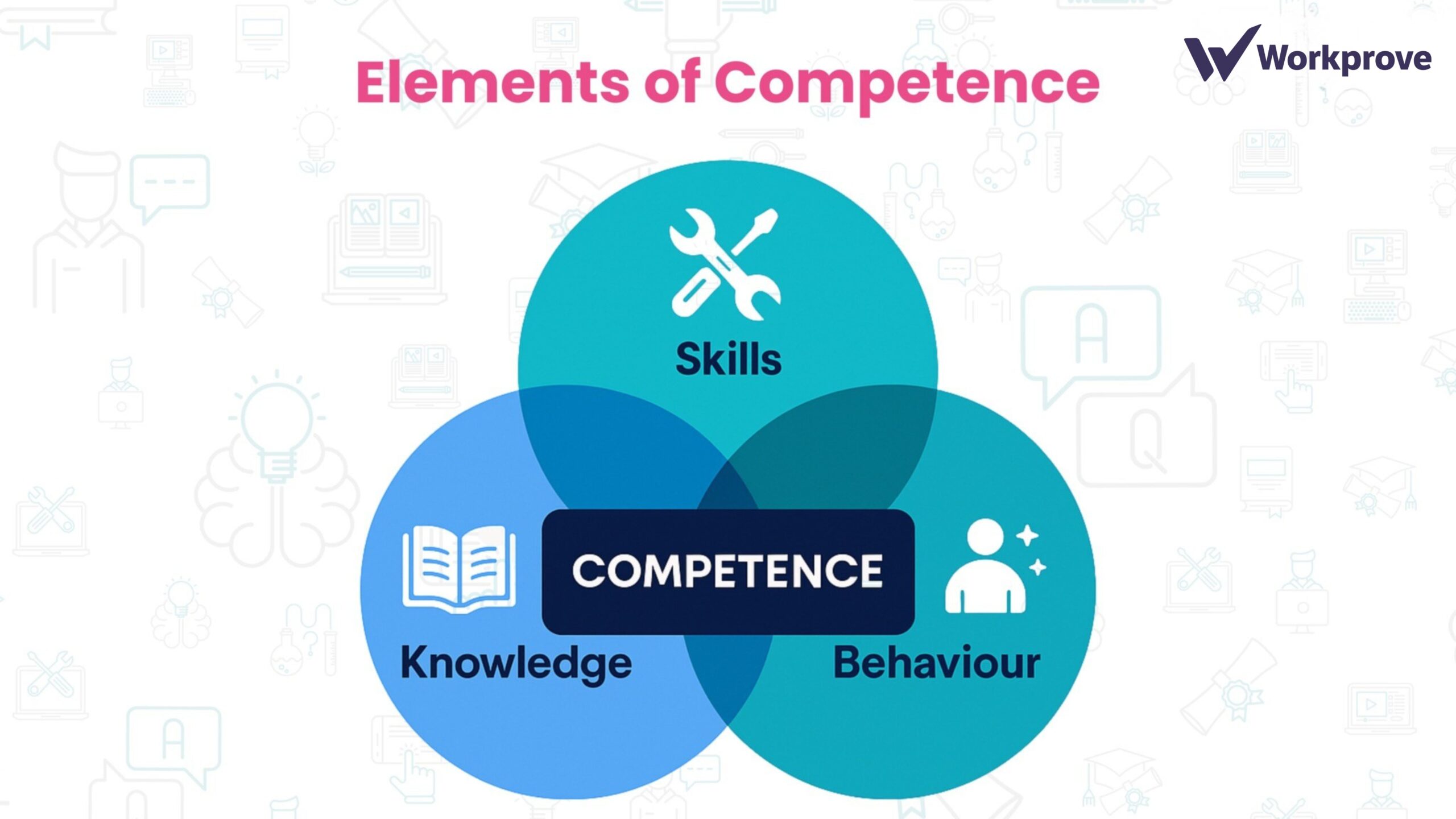

How is competency assessment different from skills testing?

Skills testing often isolates single abilities (e.g., typing speed), while competency assessment evaluates the integration of knowledge, behaviour, and skills in context.

Can it measure soft skills like communication?

Yes, when these are broken into observable behaviours such as “listens actively,” “acknowledges others’ perspectives,” or “manages disagreement calmly.”

Is it suitable outside regulated industries?

Absolutely. Many companies use competency-based models for onboarding, leadership development, and performance management, even where compliance is not a legal requirement.

Conclusion

Competency-Based Assessment is not just a trend; it’s becoming the standard for ensuring learners and employees are truly ready for the demands of their roles. Recent studies point to its potential for building skills such as soft skills (Alt, Naamati-Schneider and Weishut, 2023), but also highlight the need for careful design, consistent assessor training, and genuinely authentic tasks (Fawns et al., 2024).

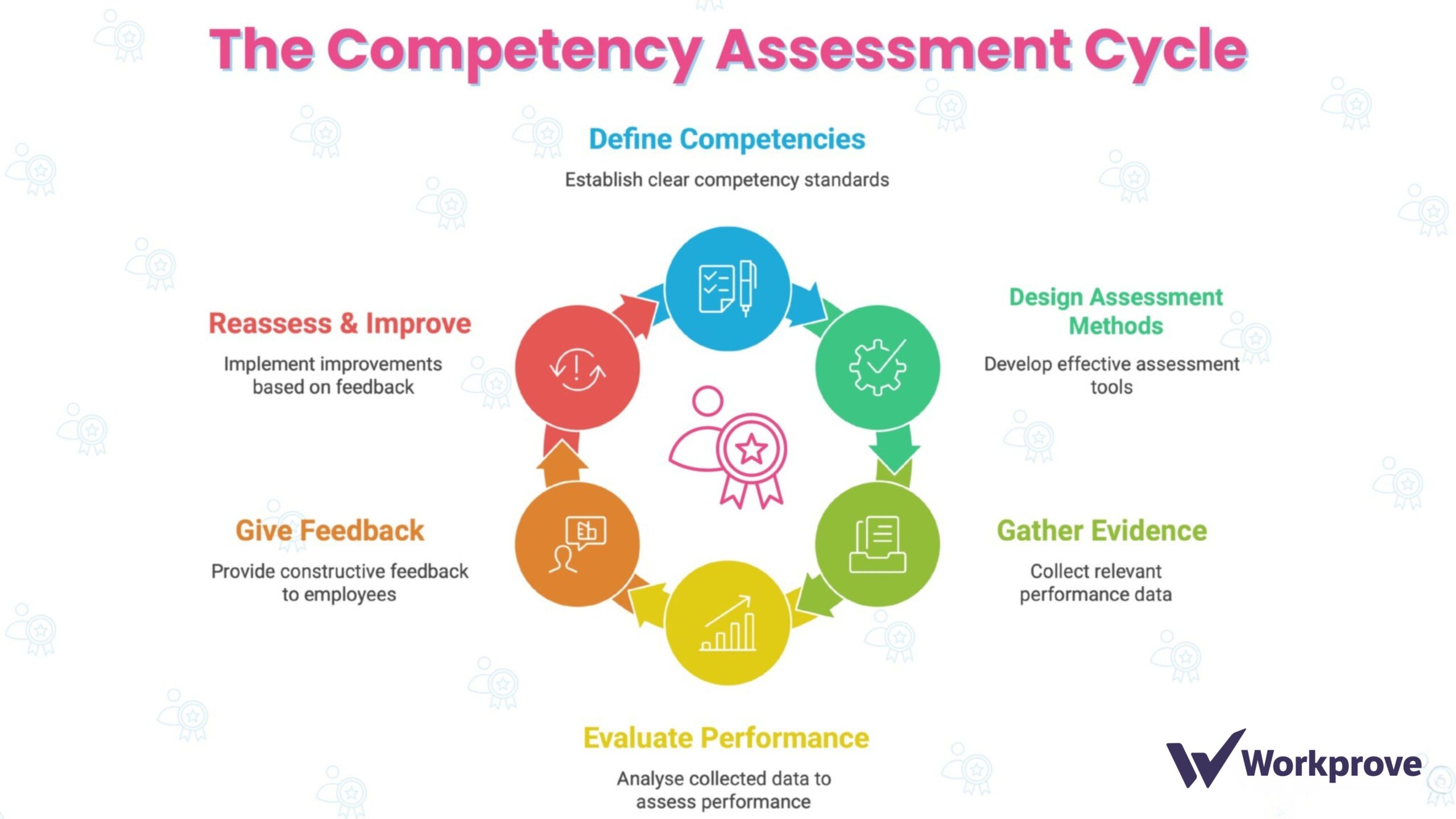

If you are just starting out, begin small: identify one or two key roles, design a competency matrix, and test it with a pilot. Use the feedback to refine and scale. And remember, CBA is most powerful when treated not as a gatekeeper, but as a continuous cycle of growth.

Download both the Competency Matrix Template and the Self-Assessment Checklist to build a complete CBA toolkit.

And when you’re ready to scale beyond spreadsheets, explore how the Moralbox Training Matrix can simplify competency tracking across your organisation.

References

Alt, D., Naamati-Schneider, L. and Weishut, D.J.N. (2023) ‘Competency-based learning and formative assessment feedback as precursors of college students’ soft skills acquisition’, Studies in Higher Education, 48(12), pp. 1901–1917. Available at: https://eric.ed.gov/?id=EJ1404213

Fawns, T., Bearman, M., Dawson, P., Nieminen, J.H., Ashford-Rowe, K., Willey, K., Jensen, L.X., Damşa, C. and Press, N. (2024) ‘Authentic assessment: from panacea to criticality’, Assessment & Evaluation in Higher Education. doi:10.1080/02602938.2024.2404634. Available at: https://www.tandfonline.com/doi/full/10.1080/02602938.2024.2404634

Velasco-Martínez, L.-C. and Tójar-Hurtado, J.-C. (2018) ‘Competency-Based Evaluation in Higher Education—Design and Use of Competence Rubrics by University Educators’, International Education Studies, 11(2), p. 118. Available at: https://www.ccsenet.org/journal/index.php/ies/article/view/70741